Monday - October 14 - Four Good Reads

UBI experiment, caregiving, history-as-experiment, AI board member hypes AI

Effect of Cash Benefits on Health Care Utilization and Health

A funny thing happened recently. A few studies were published on or nearly on the same day, each examining the effect of universal basic income (UBI). One, a set of NBER working papers funded by OpenAI and conducted by a very strong team of researchers, found little payoff or effect of UBI and took the media world by storm.

The other is the paper we’re looking at here!

This study examined the impact of UBI on fundamental health outcomes. Below is the setup of the study:

I guess that Chelsea’s UBI experiment was in response to getting hit hard by the pandemic:

Only families below 30% of the median income of greater Boston were eligible.

The researchers examined medical records from a period prior to the UBI experiment to a month after its completion.

The authors pre-specified a focus on emergency room visits as the main outcome, and a variety of health behaviors and outcomes that would lead to emergency room visits as secondary outcomes.

The big finding:

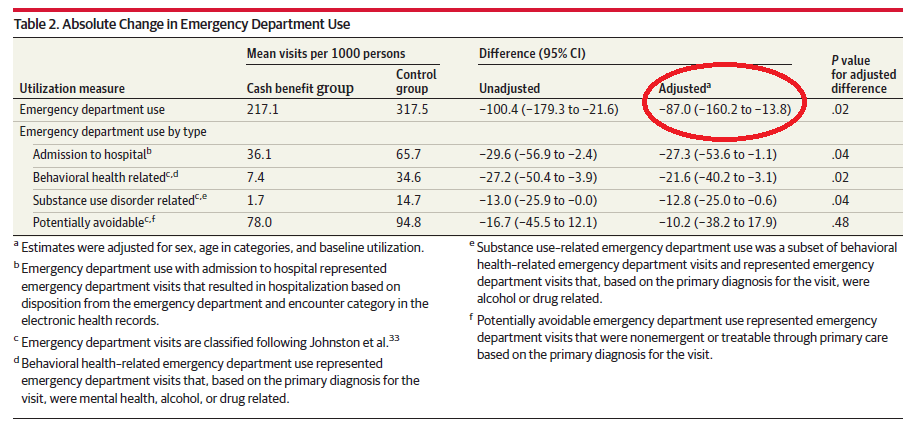

About 90 fewer emergency room visits per 1,000 persons in the group that got UBI.

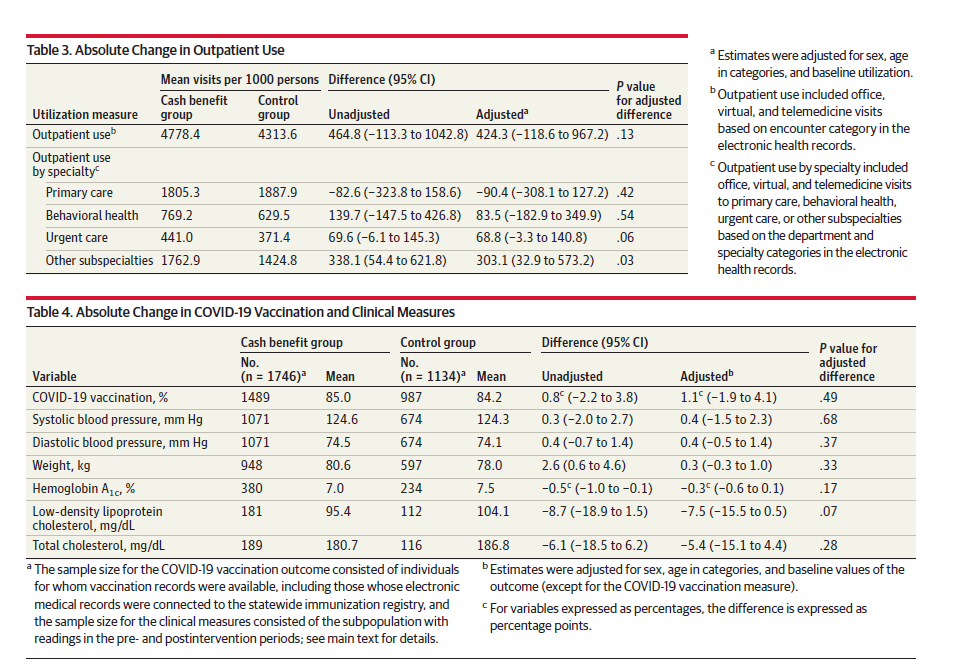

That said, there weren’t any significant group differences in any of the secondary outcomes - outpatient care, vaccination, or a bunch of health measures:

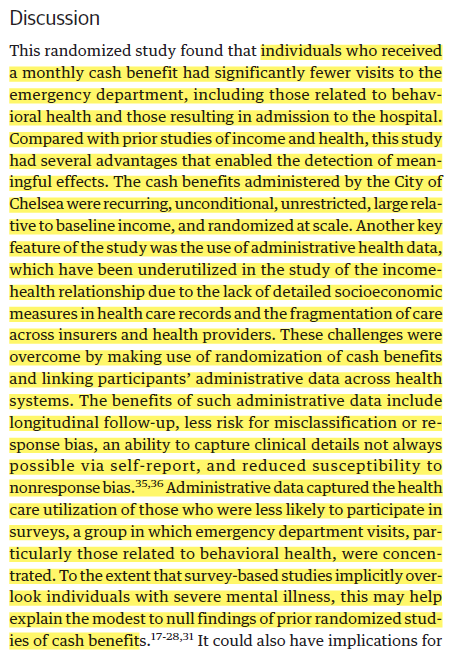

Their overall finding:

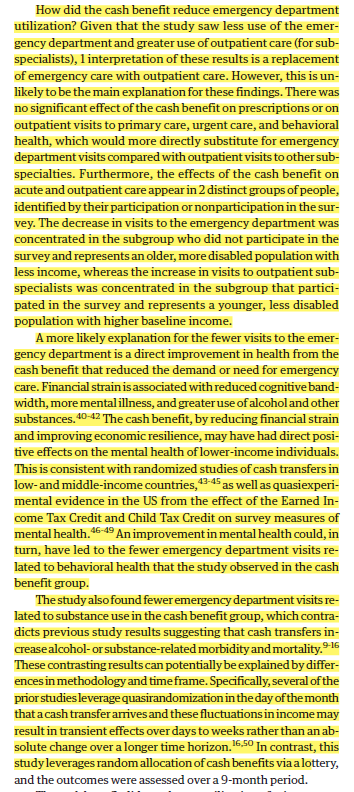

Their explanation of the likely mechanism:

Unfortunately, I’m a bit skeptical of these proposed mechanisms. The other UBI studies released at the same time examined a few of these and found little effect. I’m also skeptical that a short-run experiment like this could profoundly reshape folks’ behaviors.

It’s important to remember that the person writing this blog is, at his core, a curmudgeonly sociologist. And so when I see things like UBI experiments, I often think of the process of policy experimentation as an expression of governance that wishes to minimize the cost and responsibility the state has towards its citizenry. I also suspect that people aren’t idiots and realize that these short-run experiments will end. So whatever effects a durable UBI would produce would be different from those of an obviously temporary experiment. Nevertheless, interesting findings.

Dynamics of Later-Life Caregiving and Health. Insights From Biomarker Data and Cognitive Tests

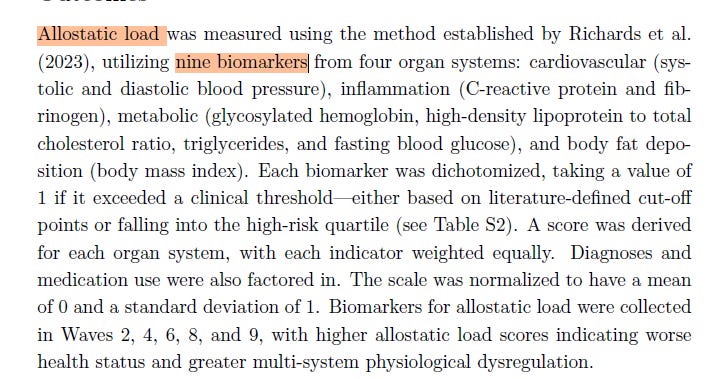

I’m a big fan of Prag’s work. What we have here is a working paper that uses longitudinal data from England to examine the effects of providing unpaid care to others among a sample of older adults, aged 50 and over.

Their study’s justification and main research question:

They lay out reasons to expect caregiving to improve and worsen health among caregivers. They also lay out reasons to focus on intensive and nonintensive caregiving, and to think about different dynamics for joining and leaving caregiving.

Their main “treatment” is unpaid caregiving to another person, varied by time intensive (8+ hours / week) and non-time intensive.

Their main health outcomes:

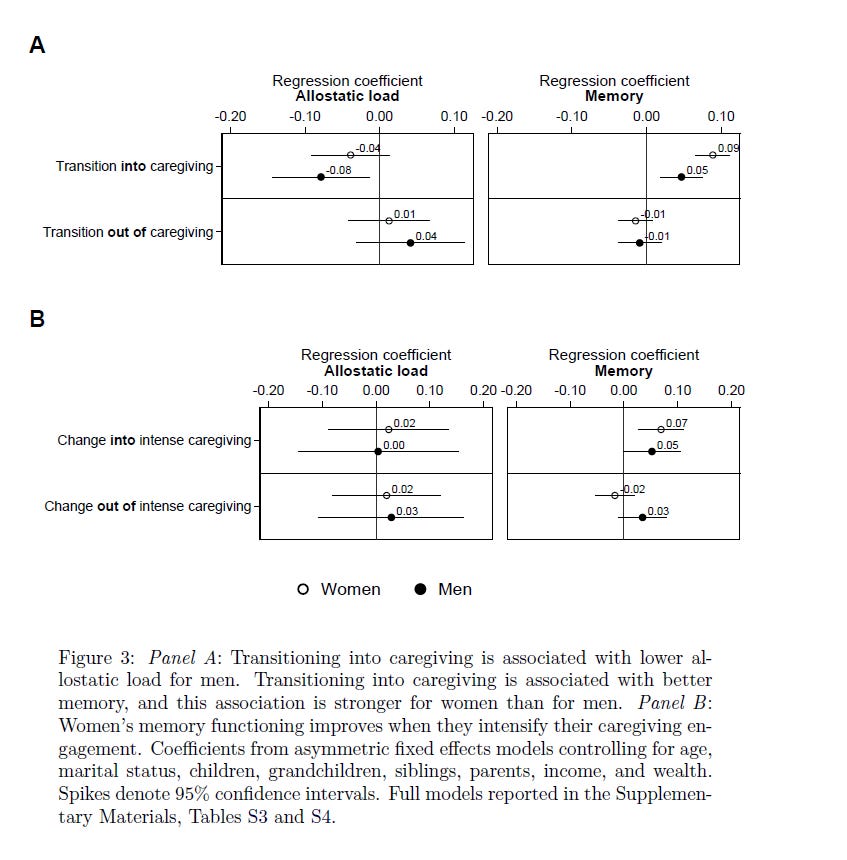

Their main finding - more effects among those entering into caregiving:

Their interpretation:

And their main interpretation of findings in the Conclusion:

I’m a sucker for asymmetrical fixed effects. They’re a straightforward and cool extension of these kinds of methods. I love the idea of examining uneven effects across entering and leaving. Also, a nice blend of sociological (e.g. relationships, networks) and biological (biomarkers as health outcome) approaches. The downside is that we don’t really get to see the anticipated mechanisms explicitly and thoroughly tested. And while I almost exclusively conduct quantitative research, I often find myself wishing for qualitative interviews and / or ethnographies of the kinds of research questions answered here. I suspect that we could get a rich sense of the mechanisms producing the change if we could see people providing intensive care over a long period of time.
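For readers who haven't run into asymmetric fixed effects, here is a minimal sketch of the idea in its simplest first-difference form. It is my own toy illustration, not the authors' model or code: the function name, the tuple layout, and the binary caregiving indicator are all assumptions. Each person's change in caregiving between waves is split into "entered" and "left", and each gets its own effect on the change in the health outcome. Because those two indicators never overlap, a regression with an intercept boils down to comparing average changes across three groups.

```python
from collections import defaultdict


def asymmetric_first_difference(rows):
    """Toy asymmetric first-difference estimator (illustrative only).

    rows: iterable of (person_id, wave, caregiving, outcome), where
    caregiving is 0 or 1. All names here are hypothetical.
    """
    by_person = defaultdict(list)
    for person_id, wave, caregiving, outcome in rows:
        by_person[person_id].append((wave, caregiving, outcome))

    # Change in the outcome between consecutive waves, grouped by
    # whether the person entered caregiving, left it, or did not change.
    changes = {"entered": [], "left": [], "no_change": []}
    for observations in by_person.values():
        observations.sort()
        for (_, c0, y0), (_, c1, y1) in zip(observations, observations[1:]):
            if c1 > c0:
                changes["entered"].append(y1 - y0)
            elif c1 < c0:
                changes["left"].append(y1 - y0)
            else:
                changes["no_change"].append(y1 - y0)

    def mean(values):
        return sum(values) / len(values) if values else float("nan")

    # The no-change group plays the role of the intercept (common trend).
    baseline = mean(changes["no_change"])
    return {
        "baseline_change": baseline,
        "effect_of_entering": mean(changes["entered"]) - baseline,
        "effect_of_leaving": mean(changes["left"]) - baseline,
    }
```

A symmetric fixed effects model would force the effect of leaving to be the mirror image of the effect of entering. Estimating them separately is what lets a finding like "more effects among those entering" show up at all.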

Randomized Control History?

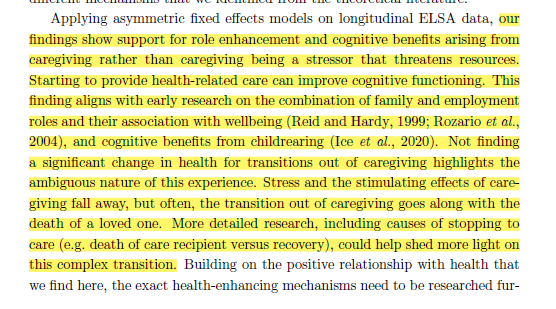

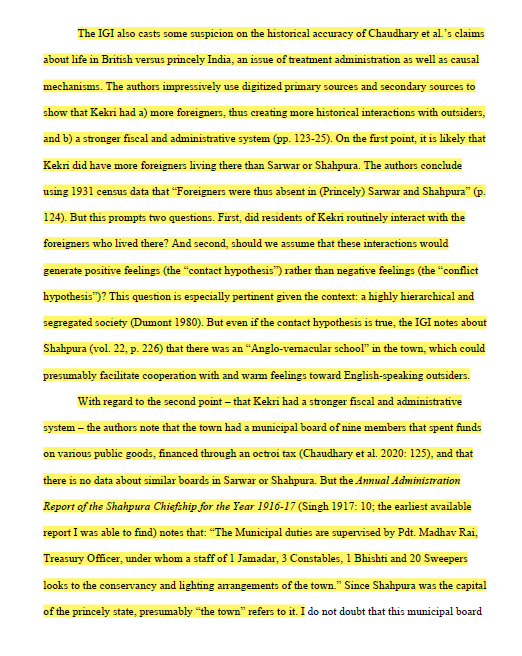

A cool critique of the application of randomized controlled trial (RCT) logic to the study of historical processes.

The author, Verghese, begins by noting the rise of causal logics and methods in recent decades in the social sciences (here focusing on political science):

This rise includes the study of historical political economy (HPE), with researchers mining history to find randomization and natural experiments to understand the impact of certain state/society/political features on state/society/political outcomes.

Verghese’s big, overall statement:

A fair critique and concern:

Verghese focuses on three types of RCT logic applied to historical studies: natural experiments (e.g. some act of god happening to one group of folks, and not to a nearly identical other group of folks), instrumental variables (finding a condition that affects the treatment and affects the outcome only through the treatment) and matching (making treatment and control groups similar and balanced on a set of observable characteristics).
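To make the instrumental variable logic concrete, here is a toy sketch with made-up numbers (nothing here comes from Verghese's paper or the studies it reviews, and the variable names are hypothetical). An unobserved confounder pushes both the treatment and the outcome, so the naive slope is biased. The instrument is unrelated to the confounder by construction, so the ratio of covariances recovers the true effect.

```python
def covariance(a, b):
    """Sample covariance of two equal-length lists of numbers."""
    n = len(a)
    mean_a = sum(a) / n
    mean_b = sum(b) / n
    return sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b)) / (n - 1)


def naive_slope(treatment, outcome):
    """Ordinary regression slope of outcome on treatment."""
    return covariance(treatment, outcome) / covariance(treatment, treatment)


def iv_slope(instrument, treatment, outcome):
    """Simple one-instrument IV (Wald) estimate of the treatment effect."""
    return covariance(instrument, outcome) / covariance(instrument, treatment)


# Hypothetical toy data: the true effect of treatment on outcome is 2.
instrument = [0, 0, 1, 1]        # e.g. some historical accident
confounder = [1, -1, 1, -1]      # unobserved, unrelated to the instrument
treatment = [z + u for z, u in zip(instrument, confounder)]
outcome = [2 * x + 3 * u for x, u in zip(treatment, confounder)]

print("naive slope:", naive_slope(treatment, outcome))
print("IV slope:", iv_slope(instrument, treatment, outcome))
```

In this toy setup the naive slope lands around 4.4, while the IV slope recovers the true 2. The catch, and the heart of the critique here, is that everything rides on the instrument really being unrelated to the confounders, which is exactly what the historical record often fails to support.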

Verghese walks through a bunch of historical studies of colonization focusing on the Indian case, taking the “historical” part seriously and considering how well the RCT logic holds up. To take a bird’s eye view - RCT doesn’t hold up well. A few examples:

Verghese looks at the underlying realities of the uneven distribution of colonization across India, which some studies treat as random. Verghese finds it isn’t terribly random:

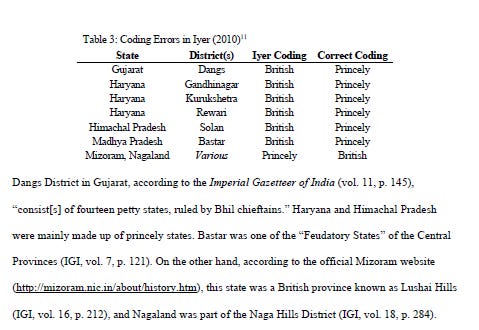

Verghese looks at studies matching different chunks of the Indian subcontinent and finds, at best, debatable descriptions of places, or more likely, coding errors:

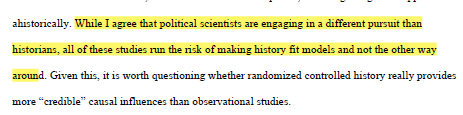

I love this claim in the conclusion:

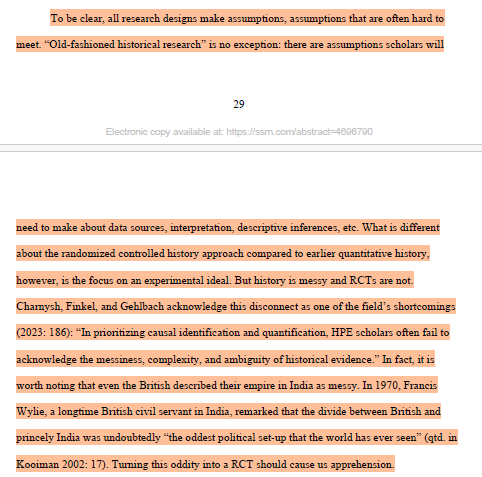

History is messy, RCT is not:

I don’t find the recommendations that end the Conclusion the most helpful - they’re mostly along the lines of “think deeply and correctly about why you want randomization, how you get it, and focus on the fundamentals of the data.” True points, but I believe that the incentives of modern higher education reward those who are fast, sloppy, and on trend over the careful.

Nevertheless, a thought-provoking paper that situates the RCT methodological logic against the realities of data. There’s an old joke that statisticians and artists are similar in that both have a tendency to fall in love with their models. A reminder of the need to keep the realities of one’s data as the core pursuit.

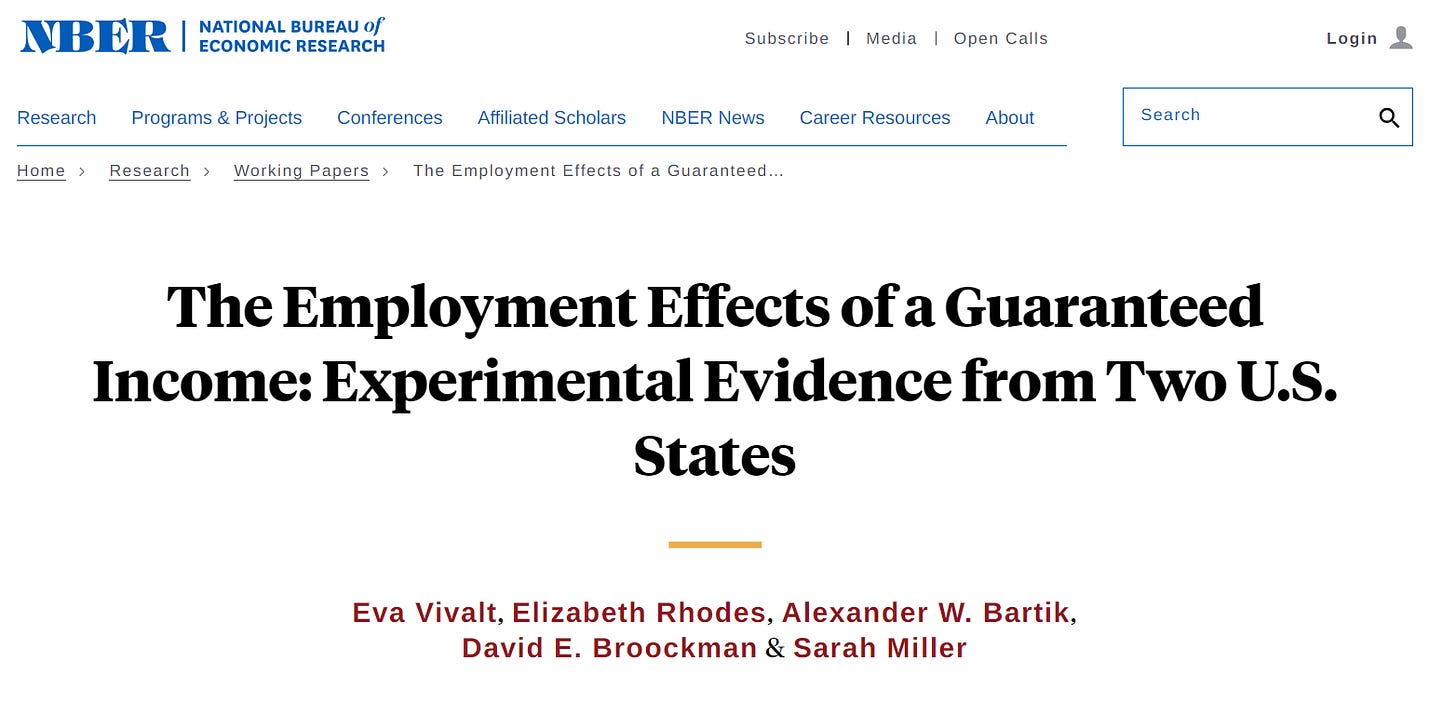

Technological Disruption in the US Labor Market

Interesting new report by some prominent economists - they examine historical technology-induced changes to the economy to get a sense of what AI might do.

I’m going to be honest - the paper’s cool in that it provides a nice long-run examination of major economic trends. They spend a lot of time talking about the insights it could provide regarding AI. But that seems more like an attempt to pump up hype and get noticed (I’ll talk about this a bit below). The data:

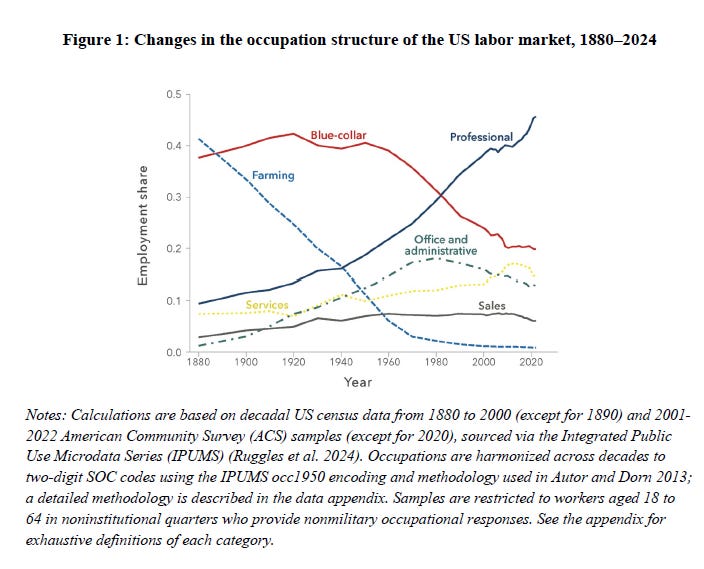

Massive long run changes in the structure of work:

Think of the difference between automation and augmentation - the two have different impacts:

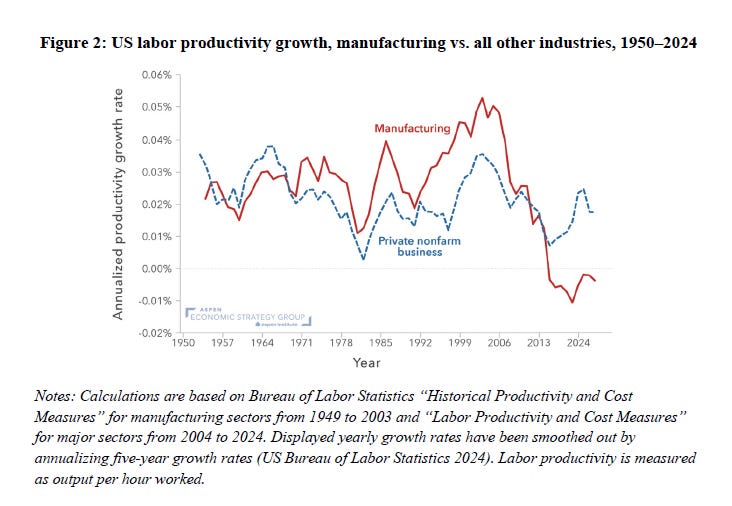

Manufacturing productivity change:

The authors argue:

I disagree: it looks like there’s a clear cratering of manufacturing productivity around the time that a massive political swing to reduce US manufacturing took effect (the early 2000s, NAFTA, the China shock, etc.). We see a policy effect, not necessarily a technological effect. But that’s, at minimum, a point up for debate.

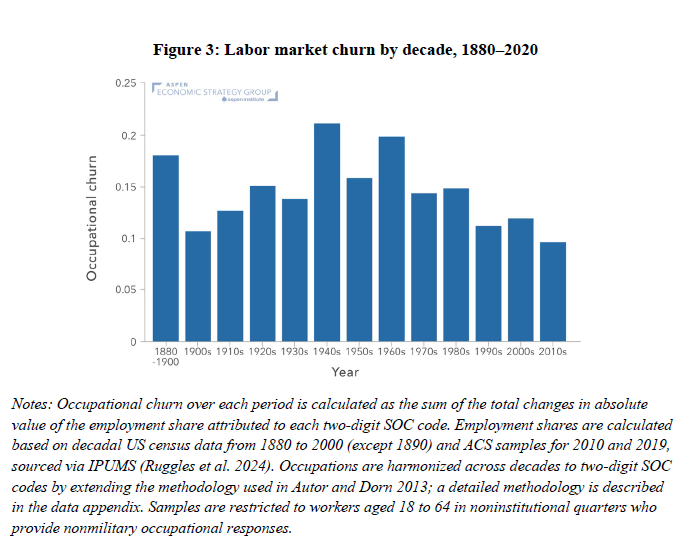

They next look at the concept of “churn”:

While the 1940s - 1970s were historically “churn-y,” we have been in an era of low churn from the 1970s onward, compared to earlier history:

This, of course, focuses on occupation churn, not individuals churning in the labor market. Two different focuses.
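One way to make "occupation churn" concrete is to add up how much each occupation's share of total employment shifts between two dates. The sketch below does that with invented numbers. The measure (half the sum of absolute share changes) and the function name are my assumptions for illustration; the report's exact definition may differ.

```python
def occupational_churn(counts_start, counts_end):
    """Half the sum of absolute changes in occupational employment shares.

    counts_start / counts_end: dicts mapping occupation name to number of
    workers at the start and end of the period. Returns a value between
    0 (no reshuffling) and 1 (complete turnover of the occupation mix).
    This is an illustrative measure, not necessarily the report's.
    """
    total_start = sum(counts_start.values())
    total_end = sum(counts_end.values())
    occupations = set(counts_start) | set(counts_end)

    total_shift = 0.0
    for occupation in occupations:
        share_start = counts_start.get(occupation, 0) / total_start
        share_end = counts_end.get(occupation, 0) / total_end
        total_shift += abs(share_end - share_start)

    # Every share point gained by one occupation is lost by another,
    # so halve the total to avoid double counting.
    return total_shift / 2


# Hypothetical worker counts, purely for illustration.
start = {"farming": 40, "manufacturing": 40, "services": 20}
end = {"farming": 10, "manufacturing": 40, "services": 30, "tech": 20}
print(occupational_churn(start, end))
```

Note that nothing in this calculation tracks individual workers. A person can switch jobs ten times without moving this number, which is why occupation churn and individual churn are two different focuses.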

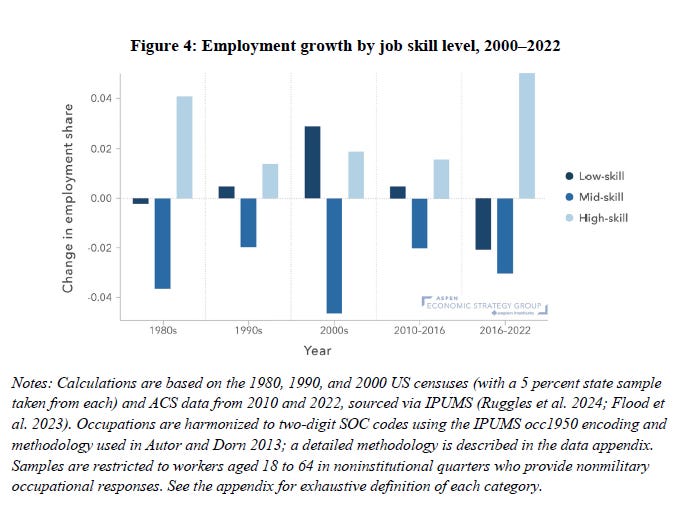

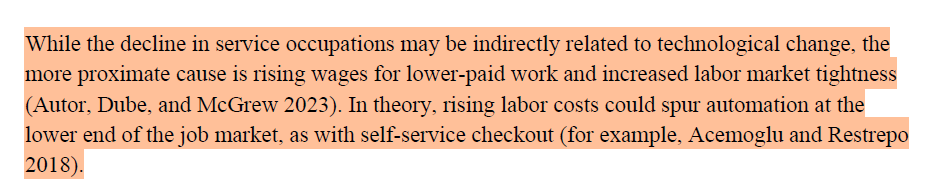

Recently, job polarization has been replaced by skill upgrading:

Their interpretation:

Interesting, but I am a bit skeptical. There are lots of points of discretion to produce these kinds of findings. Nevertheless, let’s say that there’s some evidence of declining polarization and rising upskilling.

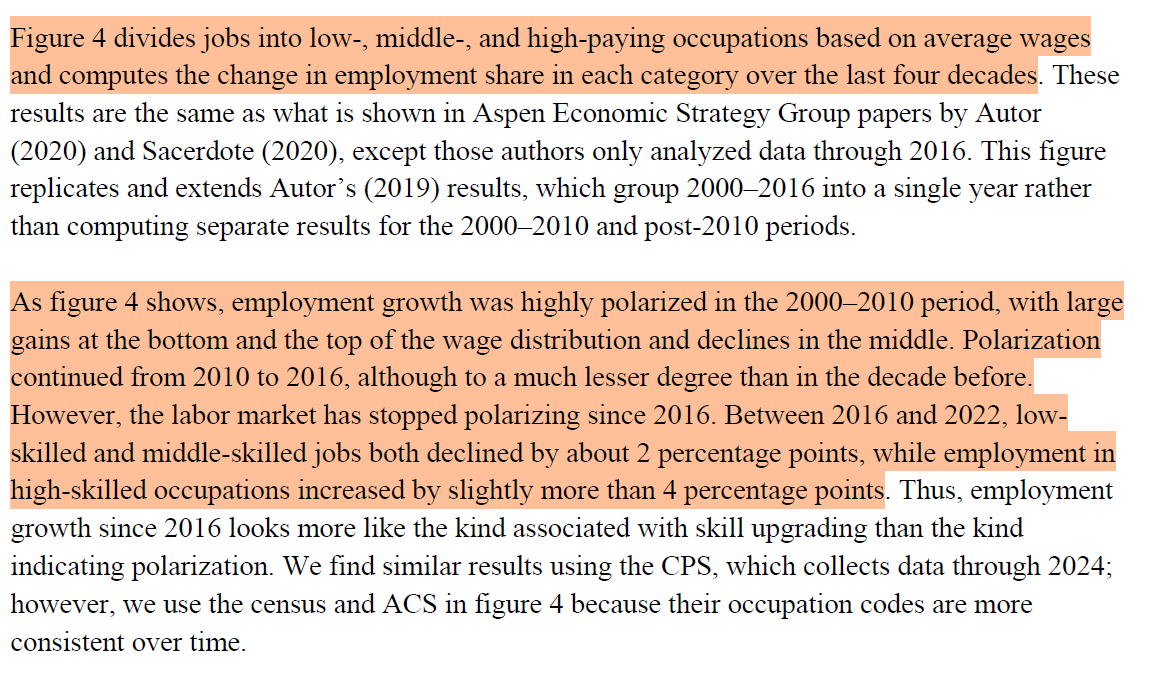

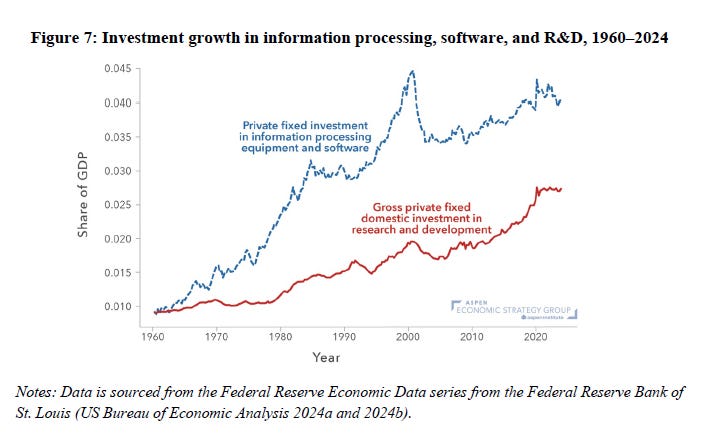

Major declines in lower-paid work:

The authors’ interpretations:

And a massive rise in STEM jobs, partially obscured by a rise in STEM-my business and management jobs:

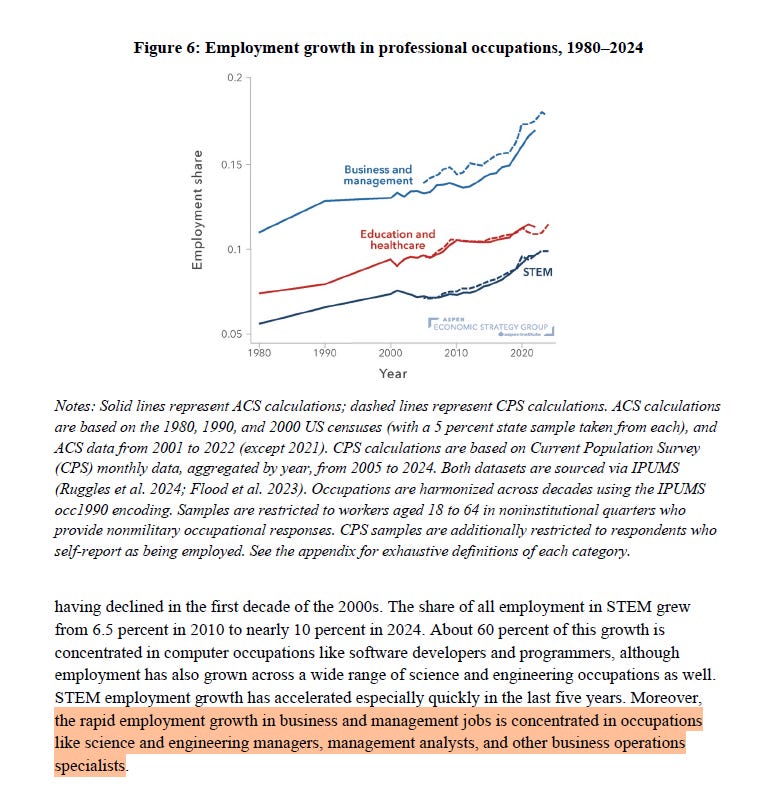

This follows a massive rise in investments in IT, software, and R&D:

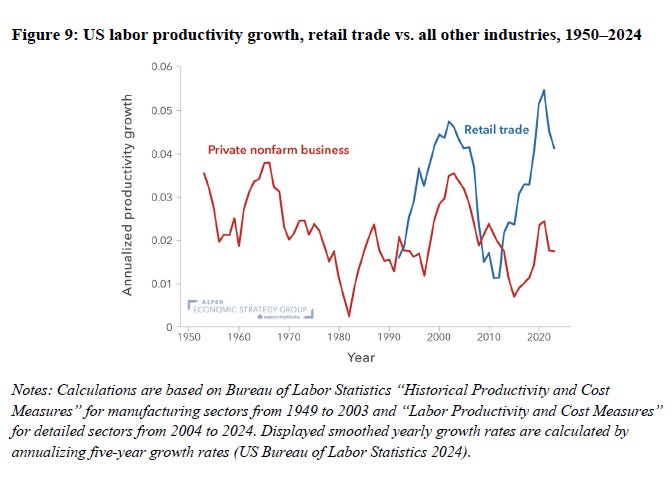

Retail sales jobs have fallen off a cliff:

whereas retail productivity has increased:

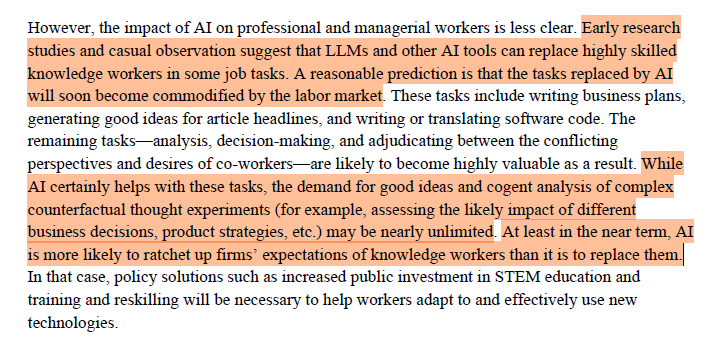

The authors end with a discussion of AI and how it will likely contribute to these trends:

Interesting stuff. A few thoughts.

I believe Larry Summers is on the OpenAI board.

This is a pretty significant connection that should probably be mentioned in an academic-ish paper that talks about the potential revolutionary disruption of AI. Dude has a stake in the hype, right?

The authors do a great job unpacking the technological contributions to these changes. They don’t do a great job discussing the policy and institutional contributions. Manufacturing’s decline wasn’t a neutral event. It was, at minimum, a major investment through policies and the state. Furthermore, the decline of retail employment was likely a joint product of the rise of private equity (itself a non-neutral event) and the unintended fallout of the ACA (think of Amy Finkelstein’s research on the impact that health insurance tax schemes have on decisions to hire lower or higher paid workers). I think that arguments of “this is just how it is, this is just how technology is going” are pretty shortsighted.

Nevertheless, lots of great information about the long run changes to the labor market.
